
Stream is an API that enables developers to build news feeds and activity streams (try the API). We are used by over 500 companies and power the feeds of more than 300 million end users. Companies such as Product Hunt, Under Armour, Powerschool, Bandsintown, Dubsmash, Compass and Fabric (Google) rely on Stream to power their news feeds. In addition to the API, the founders of Stream also wrote the most widely used open source solution for building scalable feeds.

Here’s what Stream looks like today:

- Number of servers: 180

- Feed updates per month: 34 billion

- Average API response time: 12ms

- Average real-time response time: 2ms

- Regions: 4 (US-East, EU-West, Tokyo and Singapore)

- 1B API requests per month (~20k/minute)

Given that most of our customers are engineers, we often talk about our stack. Here’s a high level overview:

- Go is our primary programming language.

- We use a custom solution built on top of RocksDB + Raft for our primary database (we started out on Cassandra but wanted more control over performance). PostgreSQL stores configs, API keys, etc.

- OpenTracing and Jaeger handle tracing, StatsD and Grafana provide detailed monitoring and we use the ELK stack for centralized logging.

- Python is our language of choice for machine learning, devops and our website https://getstream.io (Django, DRF & React).

- Stream uses a combination of fanout-on-write and fanout-on-read. This results in fast read performance when users open their feed, as well as fast propagation times when a famous user posts an update.

Tommaso Barbugli and I (@tschellenbach) are the developers who started Stream nearly 3 years ago. We founded the company in Amsterdam, participated in Techstars NYC 2015 and opened up our Boulder, Colorado office in 2016. It’s been quite a crazy ride in a fairly short amount of time! With over 15 developers and a host of supporting roles, including sales and marketing, the team feels enormous compared to the early days.

The Challenge: News Feeds & Activity Streams

The nature of follow relationships makes it hard to scale feeds. Most of you will remember Facebook’s long load times, Twitter’s fail whale or Tumblr’s year of technical debt. Feeds are hard to scale since there is no clear way to shard the data. Follow relationships connect everyone to everyone else. This makes it difficult to split data across multiple machines. If you want to learn more about this problem, check out these papers:

- Twitter’s tech back in the day with 150 million active users

- LinkedIn’s ranked feeds

- Yahoo/Princeton research paper

At a very high level there are 3 different ways to scale your feeds:

- Fanout-on-write: basically precompute everything. It’s expensive, but it’s easy to shard the data.

- Fanout-on-read: hard to scale, but more affordable and new activities show up faster.

- Combination of the above two approaches: better performance and reduced latency, but increased code complexity.
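To make the three options above concrete, here is a minimal in-memory sketch of the combined approach. It is an illustration under stated assumptions, not Stream's actual implementation: the `FeedStore` type, the `MarkPull` switch for popular accounts and all field names are hypothetical. Regular accounts push each post into their followers' inboxes at write time, while accounts marked as "pull" are merged in only when a feed is read.

```go
package main

import (
	"fmt"
	"sort"
)

// Activity is a single feed item. The fields are illustrative assumptions.
type Activity struct {
	Actor string
	Text  string
	Time  int64 // logical timestamp, larger means newer
}

// FeedStore is a hypothetical in-memory store used only for this sketch.
type FeedStore struct {
	following map[string][]string   // user -> actors the user follows
	followers map[string][]string   // actor -> users following the actor
	inbox     map[string][]Activity // user -> precomputed feed (fanout-on-write)
	outbox    map[string][]Activity // actor -> the actor's own posts
	pull      map[string]bool       // actors whose posts are merged at read time
}

func NewFeedStore() *FeedStore {
	return &FeedStore{
		following: map[string][]string{},
		followers: map[string][]string{},
		inbox:     map[string][]Activity{},
		outbox:    map[string][]Activity{},
		pull:      map[string]bool{},
	}
}

// Follow records that user follows actor.
func (s *FeedStore) Follow(user, actor string) {
	s.following[user] = append(s.following[user], actor)
	s.followers[actor] = append(s.followers[actor], user)
}

// MarkPull switches an actor (for example a very popular account) to fanout-on-read.
func (s *FeedStore) MarkPull(actor string) { s.pull[actor] = true }

// Post always stores the activity in the author's outbox. For regular
// actors it also copies the activity into every follower's inbox.
func (s *FeedStore) Post(a Activity) {
	s.outbox[a.Actor] = append(s.outbox[a.Actor], a)
	if s.pull[a.Actor] {
		return // followers will pick this up when they read
	}
	for _, user := range s.followers[a.Actor] {
		s.inbox[user] = append(s.inbox[user], a)
	}
}

// ReadFeed merges the precomputed inbox with the outboxes of followed
// pull actors and returns the result newest first.
func (s *FeedStore) ReadFeed(user string) []Activity {
	feed := append([]Activity(nil), s.inbox[user]...)
	for _, actor := range s.following[user] {
		if s.pull[actor] {
			feed = append(feed, s.outbox[actor]...)
		}
	}
	sort.Slice(feed, func(i, j int) bool { return feed[i].Time > feed[j].Time })
	return feed
}

func main() {
	s := NewFeedStore()
	s.Follow("alice", "bob")
	s.Follow("alice", "star")
	s.MarkPull("star")
	s.Post(Activity{Actor: "bob", Text: "lunch", Time: 1})
	s.Post(Activity{Actor: "star", Text: "new album", Time: 2})
	for _, a := range s.ReadFeed("alice") {
		fmt.Println(a.Actor, a.Text)
	}
}
```

The trade-off shows up in the two methods: `Post` does work proportional to the follower count for regular actors, while `ReadFeed` does extra work per followed pull actor.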

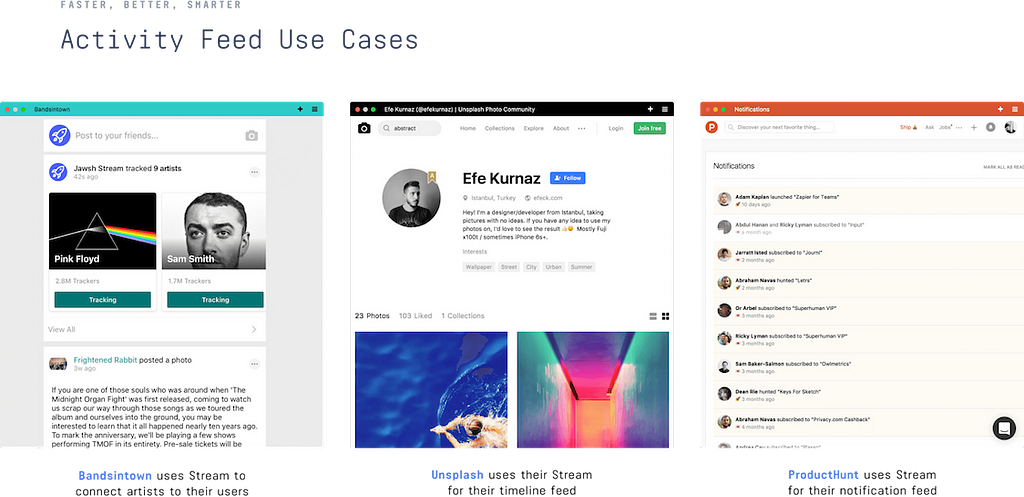

Stream uses a combination of fanout-on-write and fanout-on-read. This allows us to effectively support customers with highly connected graphs as well as customers with sparser datasets. This is important since the ways in which our customers use Stream are very different. Have a look at these screenshots from Bandsintown, Unsplash, and Product Hunt:

Switching from Python to Go

After years of optimizing our existing feed technology, we decided to make a larger leap with Stream 2.0. While the first iteration of Stream was powered by Python and Cassandra, for Stream 2.0 we switched our infrastructure to Go. The main reason we switched from Python to Go is performance. Certain features of Stream, such as aggregation, ranking and serialization, were very difficult to speed up using Python.

We’ve been using Go since March 2017 and it’s been a great experience so far. Go has greatly increased the productivity of our development team. Not only has it improved the speed at which we develop, it’s also 30x faster for many components of Stream.

The performance of Go greatly influenced our architecture in a positive way. With Python we often found ourselves delegating logic to the database layer purely for performance reasons. The high performance of Go gave us more flexibility in terms of architecture. This led to a huge simplification of our infrastructure and a dramatic improvement of latency. For instance, we saw a 10 to 1 reduction in web-server count thanks to the lower memory and CPU usage for the same number of requests.

Initially we struggled a bit with package management for Go. However, using Dep together with the VG package contributed to creating a great workflow.

If you’ve never tried Go, you’ll want to try this online tour: https://tour.golang.org/welcome/1

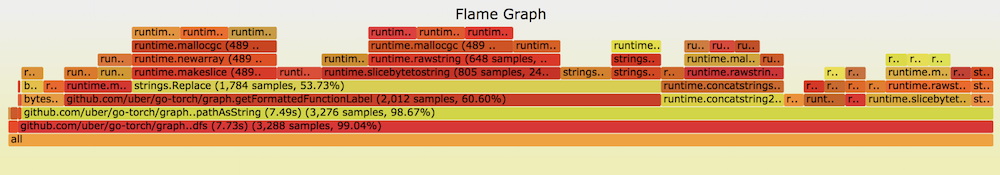

Go as a language is heavily focused on performance. The built-in pprof tool is amazing for finding performance issues. Uber’s go-torch library is great for visualizing data from pprof and will be bundled in pprof in Go 1.10.

Switching from Cassandra to RocksDB & Raft

1.0 of Stream leveraged Cassandra for storing the feed. Cassandra is a common choice for building feeds. Instagram, for instance, started out with Redis but eventually switched to Cassandra to handle their rapid usage growth. Cassandra can handle write-heavy workloads very efficiently.

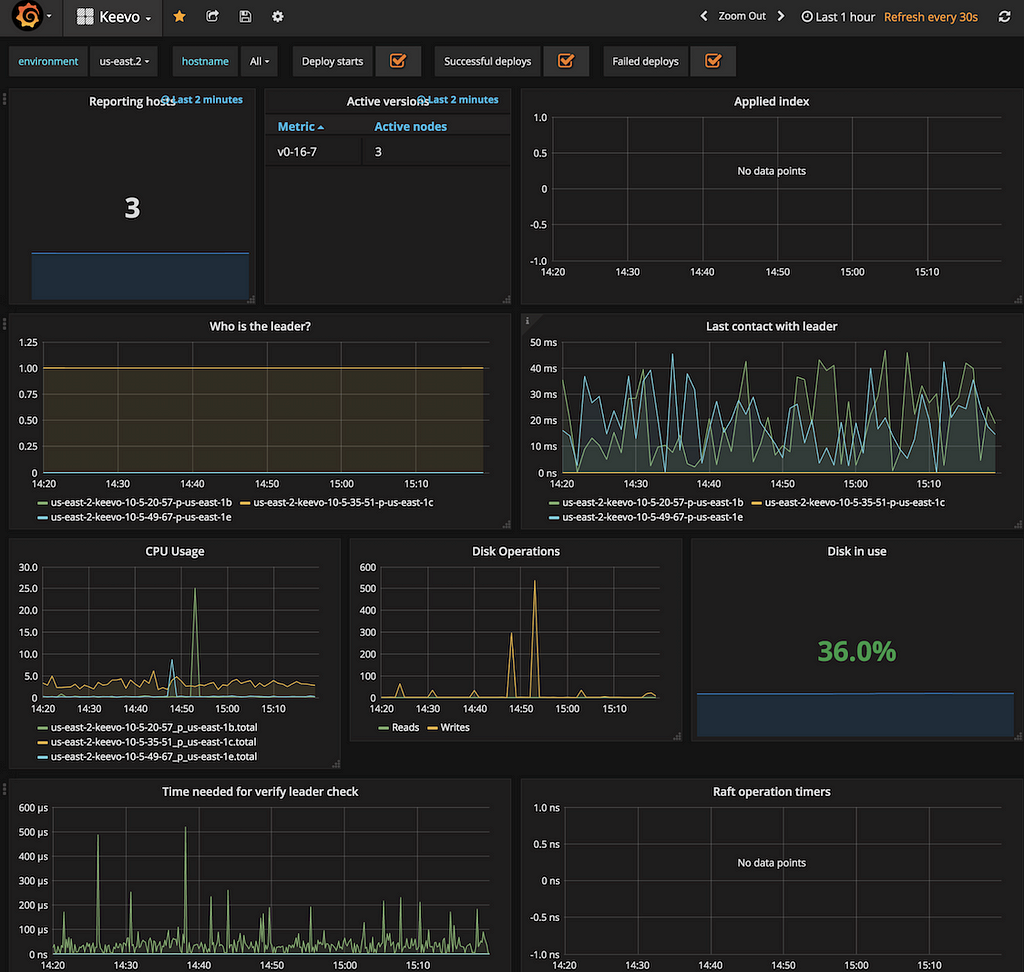

Cassandra is a great tool that allows you to scale write capacity simply by adding more nodes, though it is also very complex. This complexity made it hard to diagnose performance fluctuations. Even though we had years of experience with running Cassandra, it still felt like a bit of a black box. When building Stream 2.0 we decided to go for a different approach and build Keevo. Keevo is our in-house key-value store built upon RocksDB, gRPC and Raft.

RocksDB is a highly performant embeddable database library developed and maintained by Facebook’s data engineering team. RocksDB started as a fork of Google’s LevelDB that introduced several performance improvements for SSDs. Nowadays RocksDB is a project on its own and is under active development. It is written in C++ and it’s fast. Have a look at how this benchmark handles 7 million QPS. In terms of technology it’s much simpler than Cassandra. This translates into reduced maintenance overhead, improved performance and, most importantly, more consistent performance. It’s interesting to note that LinkedIn also uses RocksDB for their feed.

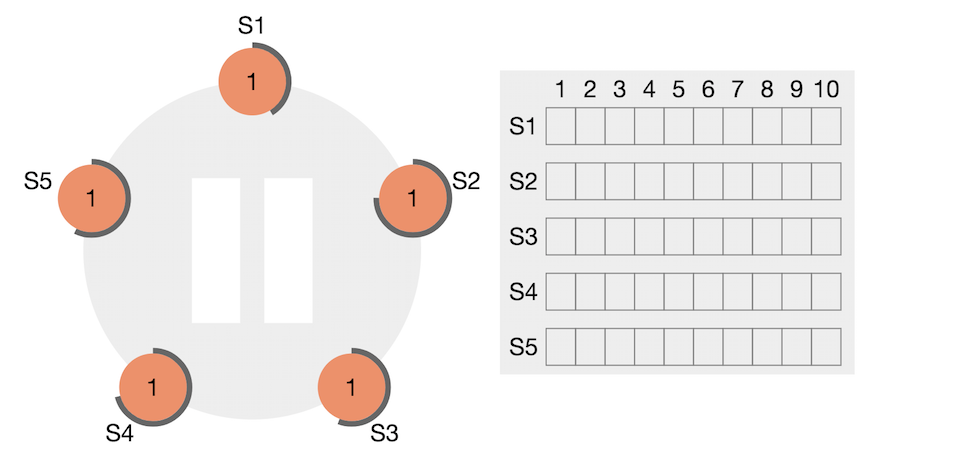

Our infrastructure is hosted on AWS and is designed to survive entire availability zone outages. Unlike Cassandra, Keevo clusters organize nodes into leaders and followers. When a leader (master) node becomes unavailable, the other nodes in the same deployment start an election and pick a new leader. Electing a new leader is a fast operation and barely impacts live traffic.

To do this, Keevo implements the Raft consensus algorithm using Hashicorp’s Go implementation. This ensures that every bit stored in Keevo is stored on 3 different servers and operations are always consistent. This site does a great job of visualizing how Raft works: https://raft.github.io/
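The majority rule that Raft relies on for elections and commits can be sketched in a few lines. This is not Keevo's code and it does not use Hashicorp's library; the function names are made up for illustration. It only shows why a deployment with 3 servers keeps working when one of them is lost.

```go
package main

import "fmt"

// quorum returns the smallest number of nodes that forms a majority
// in a cluster with n voting members.
func quorum(n int) int { return n/2 + 1 }

// winsElection reports whether a candidate that collected the given
// number of votes (including its own) becomes the new leader.
func winsElection(clusterSize, votes int) bool {
	return votes >= quorum(clusterSize)
}

// toleratedFailures returns how many nodes can be unavailable while the
// remaining nodes can still form a majority.
func toleratedFailures(n int) int { return n - quorum(n) }

func main() {
	n := 3 // the replication factor mentioned above
	fmt.Println("quorum:", quorum(n))
	fmt.Println("tolerated failures:", toleratedFailures(n))
	fmt.Println("2 votes win:", winsElection(n, 2))
	fmt.Println("1 vote wins:", winsElection(n, 1))
}
```

With 3 servers, the two followers that remain after a leader failure are enough to elect a replacement, since one of them can gather 2 votes.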

Not Quite Microservices

By leveraging Go and RocksDB we’re able to achieve great feed performance. The average response time is around 12ms. The architecture lies somewhere between a monolith and microservices. Stream runs on the following 7 services:

- Stream API

- Keevo

- Real time & Firehose

- Analytics

- Personalization & Machine learning

- Site & Dashboard

- Async Workers

To see all our services divided into stacks, head over here.

Personalization & Machine Learning

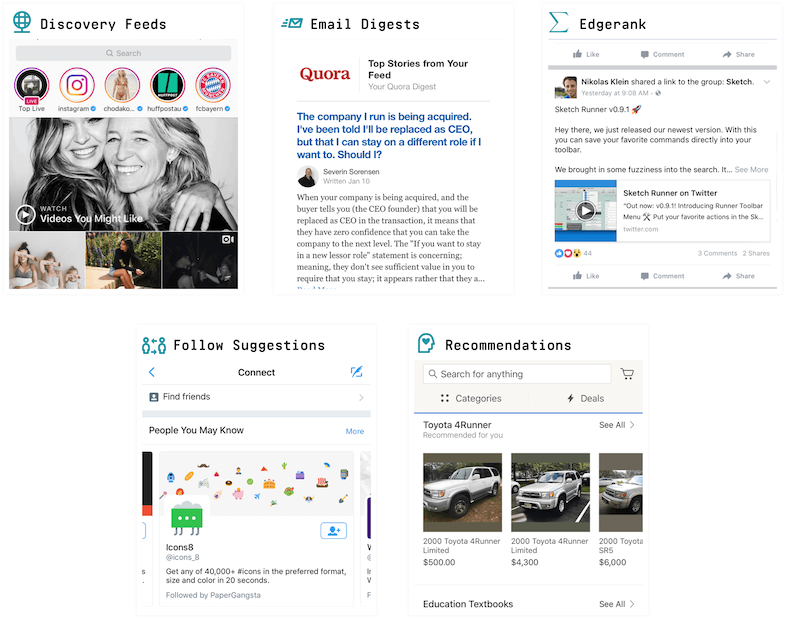

Almost all large apps with feeds use machine learning and personalization. For instance, LinkedIn prioritizes the items in your feed. Instagram’s explore feed displays pictures outside of the people you follow that you might be interested in. Etsy uses a similar approach to optimize ecommerce conversion. Stream supports the following 5 use cases for personalization:

Documentation for building personalized feeds.

All of these personalization use cases rely on combining feeds with analytics and machine learning. For the machine learning side we generate the models using Python. The models are different for each of our enterprise customers. Typically we’ll use one of these amazing libraries:

- LightFM — How to implement recommender systems

- XGBoost

- Scikit learn

- Numpy, Pandas and Dask

- Jupyter Notebook (For development)

- Mesa Framework

Analytics

Analytics data is collected using a tiny Go-based server. In the background, it spawns goroutines to roll up the data as needed. The resulting metrics are stored in Elastic. In the past, we looked at Druid, which seems like a solid project. For now, we can get away with a simpler solution, though.
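A background rollup of this kind might look roughly like the sketch below. The `Event` and `Rollup` types, the per-minute bucket size and the in-memory map are all assumptions made for illustration; the real server stores its metrics in Elastic rather than in a map.

```go
package main

import (
	"fmt"
	"sync"
)

// Event is a single analytics data point. The fields are hypothetical.
type Event struct {
	Name    string
	UnixSec int64 // event time as Unix seconds
}

// bucketKey identifies one counter: an event name within one minute.
type bucketKey struct {
	name   string
	minute int64 // Unix time divided by 60
}

// Rollup aggregates raw events into per-minute counts.
type Rollup struct {
	mu     sync.Mutex
	counts map[bucketKey]int
}

func NewRollup() *Rollup {
	return &Rollup{counts: map[bucketKey]int{}}
}

// Run consumes events until the channel is closed, then closes done.
// It is meant to be started in its own goroutine.
func (r *Rollup) Run(events <-chan Event, done chan<- struct{}) {
	for e := range events {
		key := bucketKey{name: e.Name, minute: e.UnixSec / 60}
		r.mu.Lock()
		r.counts[key]++
		r.mu.Unlock()
	}
	close(done)
}

// Count returns how many events with the given name fell into the minute.
func (r *Rollup) Count(name string, minute int64) int {
	r.mu.Lock()
	defer r.mu.Unlock()
	return r.counts[bucketKey{name: name, minute: minute}]
}

func main() {
	r := NewRollup()
	events := make(chan Event)
	done := make(chan struct{})
	go r.Run(events, done)

	events <- Event{Name: "click", UnixSec: 60}
	events <- Event{Name: "click", UnixSec: 119}
	events <- Event{Name: "click", UnixSec: 120}
	close(events)
	<-done

	fmt.Println("clicks in minute 1:", r.Count("click", 1))
	fmt.Println("clicks in minute 2:", r.Count("click", 2))
}
```

The request path only sends on the channel, while the aggregation cost is paid by the background goroutine.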

Dashboard & Site

The dashboard is powered by React and Redux. We also use React and Redux for all of our example applications:

- Cabin: A tutorial series on building a social network with React & Redux

- Winds: Open source RSS & Podcast app (Winds 2.0 is coming out very soon)

The site, as well as the API for the site, is powered by Python, Django and Django Rest Framework. Stream is sponsoring Django Rest Framework since it’s a pretty great open source project. If you need to build an API quickly there is no better tool than DRF and Python.

We use Imgix to resize the images on our site. For us, Imgix is cost efficient, fast and overall a great service. Thumbor is a good open source alternative.

Real time

Our real time infrastructure is based on Go, Redis and the excellent gorilla websocket library. It implements the Bayeux protocol. In terms of architecture it’s very similar to the Node-based Faye library.

It was interesting to read the “Ditching Go for Node.js” post on Hacker News. The author moves from Go to Node to improve performance. We actually did the exact opposite and moved from Node to Go for our real time system. The new Go-based infrastructure handles 8x the traffic per node.

Devops, Testing & Multiple Regions

In terms of devops the provisioning and configuration of instances is fully automated using a combination of:

- CloudFormation

- Cloud-Init

- Puppet

- Boto & Fabric

Because our infrastructure is defined in code it has become trivial to launch new regions. We heavily use CloudFormation. Every single piece of our stack is defined in a CloudFormation template. If needed we are able to spawn a new dedicated shard in a few minutes. In addition, AWS Parameter Store is used to hold application settings. Our largest deployment is in US-East, but we also have regions in Tokyo, Singapore and Dublin.

A combination of Puppet and Cloud-init is used to configure our instances. We run our self-contained Go binaries directly on the EC2 instances without any additional containerization layer.

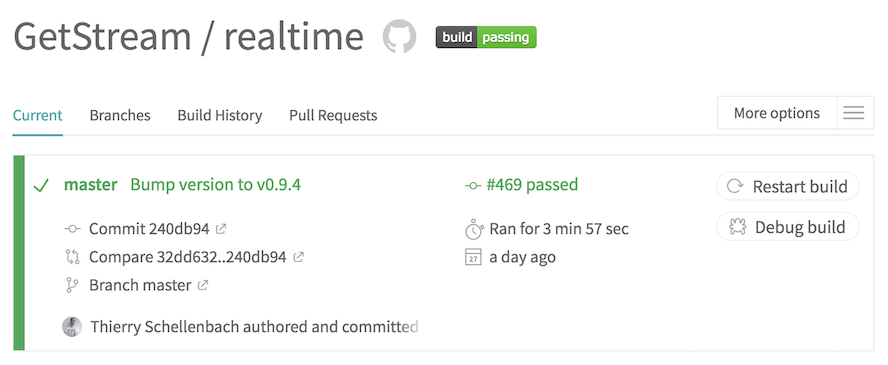

Releasing new versions of our services is done by Travis. Travis first runs our test suite. Once it passes, it publishes a new release binary to GitHub. Common tasks such as installing dependencies for the Go project, or building a binary are automated using plain old Makefiles. (We know, crazy old school, right?) Our binaries are compressed using UPX.

Tool Highlight: Travis

Travis has come a long way over the past years. I used to prefer Jenkins in some cases since it was easier to debug broken builds. With the addition of the aptly named “debug build” button, Travis is now the clear winner. It’s easy to use and free for open source, with no need to maintain anything.

Next we use Fabric to do a rolling deploy to our AWS instances. If anything goes wrong, the deploy halts. We take stability very seriously:

- A high level of test coverage is required.

- Releases are created by Travis (making it hard to deploy without running tests).

- Code is reviewed by at least 2 team members.

- Our extensive QA integration test suite evaluates if all 7 components still work.

We’ve written about our experience with testing our Go codebase. When things do break we do our best to be transparent about the ongoing issue:

- StatusPage

- Twilio for 24/7 phone support

- VictorOps for notifying our team

- #firefighting channel on Slack

- Intercom for customer support

VictorOps is a recent addition to our support stack. It’s made it very easy to collaborate on ongoing issues.

Tool Highlight: VictorOps

The best part about VictorOps is how they use a timeline to collaborate amongst team members. VictorOps is an elegant way to keep our team in the loop about outages. It also integrates well with Slack. This setup enables us to quickly react to any problems that make it into production, work together and resolve them faster.

The vast majority of our infrastructure runs on AWS:

- ELB for load balancing

- RDS for reliable Postgres hosting

- ElastiCache for Redis hosting

- Route53 for our DNS

- Cloudfront for our CDN

- AWS ElasticSearch for ELK and tracing with Jaeger

The devops responsibilities are shared across our team. While we do have one dedicated devops engineer, all our developers have to understand and own the entire workflow.

Monitoring

Stream uses OpenTracing for tracing and Grafana for beautiful dashboards. The tracking for Grafana is done using StatsD. The end result is this beauty:

We track our errors in Sentry and use the ELK stack to centralize our logs.
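For readers unfamiliar with StatsD, the metrics behind such dashboards are plain text lines of the form `<name>:<value>|<type>`, usually sent over UDP. The sketch below only formats such lines. The metric names are made up for this example and are not Stream's real metric names.

```go
package main

import "fmt"

// timingMetric formats a StatsD timing line: "<name>:<milliseconds>|ms".
func timingMetric(name string, ms int64) string {
	return fmt.Sprintf("%s:%d|ms", name, ms)
}

// counterMetric formats a StatsD counter line: "<name>:<delta>|c".
func counterMetric(name string, delta int64) string {
	return fmt.Sprintf("%s:%d|c", name, delta)
}

func main() {
	// Hypothetical metric names, chosen only for illustration.
	fmt.Println(timingMetric("api.feed.read", 12))
	fmt.Println(counterMetric("api.requests", 1))
}
```

A StatsD daemon aggregates these lines over a flush interval and forwards the results to a backend that Grafana can chart.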

Tool Highlight: OpenTracing + Jaeger

One new addition to the stack is OpenTracing. In the past we used New Relic, which works like a charm for Python, but isn’t able to automatically measure tracing information for Go. OpenTracing with Jaeger is a great solution that works very well for Stream. It also has, perhaps, the best logo for a tracing solution:

Closing Thoughts

Go is an absolutely amazing language and has been a major win in terms of performance and developer productivity. For tracing we use OpenTracing and Jaeger. Our monitoring is running on StatsD and Graphite. Centralized logging is handled by the ELK stack.

Stream’s main database is a custom solution built on top of RocksDB and Raft. In the past we used Cassandra, which we found hard to maintain and which didn’t give us enough control over performance when compared to RocksDB.

We leverage external tools and solutions for everything that’s not a core competence. Redis hosting is handled by ElastiCache, Postgres by RDS, email by Mailgun, test builds by Travis and error reporting by Sentry.

Thank you for reading about our stack! If you’re a user of Stream, please be sure to add Stream to your stack on StackShare. If you’re a talented individual, come work with us! And finally, if you haven’t tried out Stream yet, take a look at this quick tutorial for the API.

This post was originally written by Thierry Schellenbach, CEO at GetStream.io. The original post can be found at https://stackshare.io/stream/stream-and-go-news-feeds-for-over-300-million-end-users.

Stream & Go: News Feeds for Over 300 Million End Users was originally published in Hacker Noon on Medium, where people are continuing the conversation by highlighting and responding to this story.
